Why Edge Computing Changes Every Company's Playbook

The cloud taught CFOs that renting outcomes beats owning infrastructure. Physical AI has a different opinion. When a machine making real-time decisions in a physical environment loses its connection, the cost is not an inconvenience. It is a measurable, per-hour number that belongs on a balance sheet. Edge computing moved from an IT footnote to a business continuity question, and business continuity is a CFO question.

The cloud taught companies a useful lesson: stop buying infrastructure, start renting outcomes. Pay as you go. No hardware to depreciate. Flex up when you need it, flex down when you don't. For most of the last decade, that was the right answer.

Physical AI has a different opinion.

The Question Most Operators Are Asking Wrong

"Cloud versus edge" gets framed as an IT decision. Where does the data live? How does it move? Who manages the servers? These are legitimate questions. They are also the wrong ones to lead with.

The right question is: What does it cost when this system goes down?

For a SaaS company, the answer is an inconvenient afternoon. For a company running Physical AI (a warehouse robot navigating a live floor, a robotic arm on a manufacturing line, a quality control system running at speed), the answer is measured in tens of thousands of dollars per hour. Unplanned downtime in manufacturing and logistics doesn't round to zero. It rounds to a board conversation.

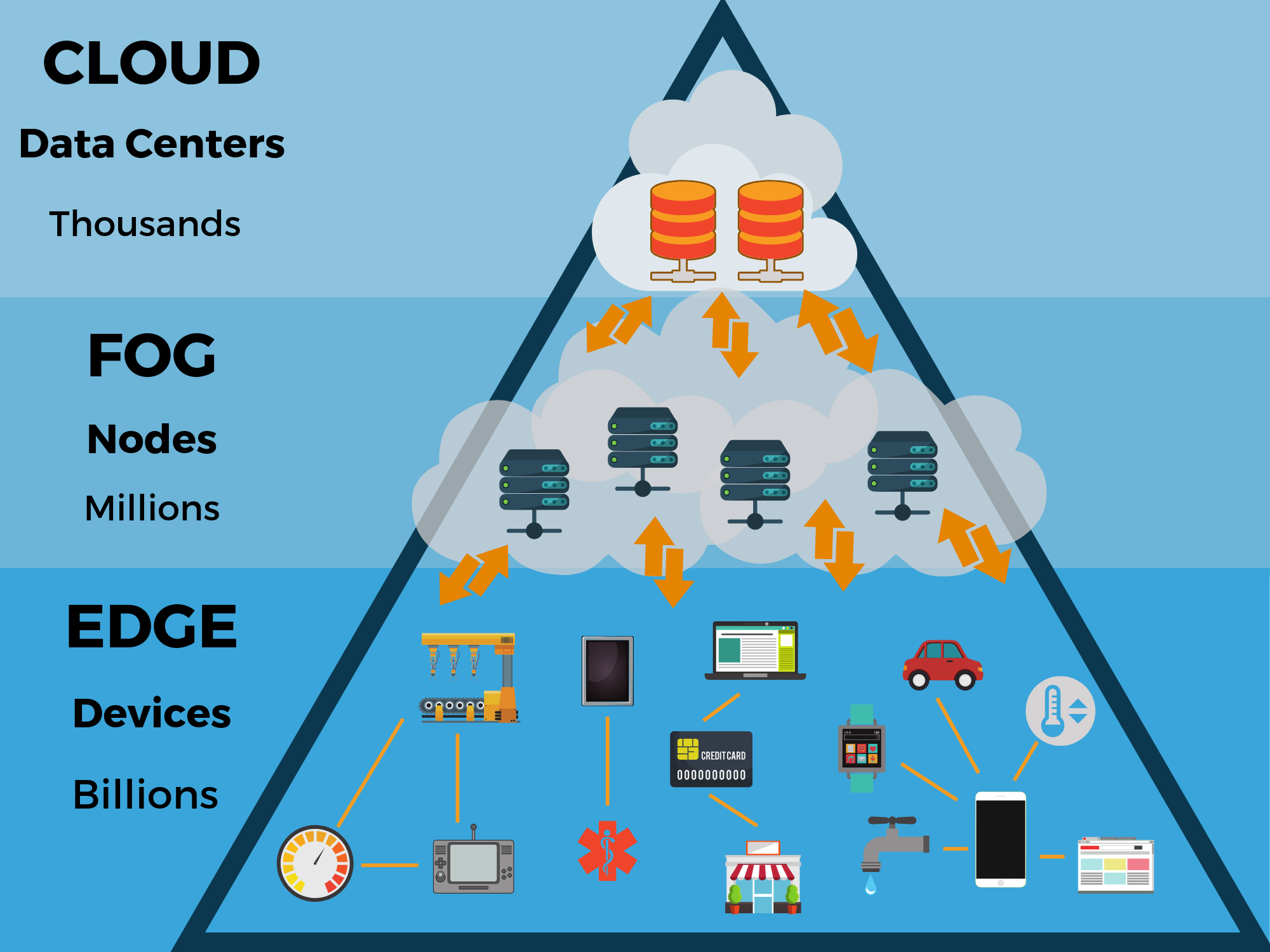

Relying on the cloud means sending data to a remote server and waiting for a response. For software on a screen, that wait is invisible. For a machine making real-time decisions in a physical environment, it is the difference between a functioning operation and a stopped one. This is why edge computing (processing data locally, on the device or on premises) has moved from an infrastructure footnote to a business continuity question. And business continuity is an operator question, not an IT one.
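To make that wait concrete, take a hypothetical robot moving at 2 m/s whose next decision depends on a 150 ms round trip to a remote server. Both figures are illustrative assumptions, not measurements from any real deployment. The distance the machine covers before the answer arrives is its speed times the round-trip time:

$$
d = v \cdot t_{\text{rtt}} = 2\,\text{m/s} \times 0.15\,\text{s} = 0.3\,\text{m}
$$

If the connection drops entirely, $t_{\text{rtt}}$ has no upper bound, and a machine that depends on the cloud for its next decision has nothing to act on. That is the point at which the operation stops.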

What Changes on the Balance Sheet

Cloud-first thinking trained companies to prefer operating expenditure over capital expenditure: no commitments, no depreciation, no maintenance cycles. Edge deployments bring physical assets back into the picture (local compute hardware, ruggedized devices, on-premises servers) and, with them, capital-planning conversations that haven't been part of the infrastructure budget in years.

For companies deploying Physical AI at scale, this is not a rounding error. It is a strategic infrastructure investment that needs to be evaluated the way you'd evaluate a fleet or a facility. The operator who keeps squeezing it into a SaaS subscription mental model is going to misprice it every time.
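One way to evaluate edge hardware the way you'd evaluate a fleet is to convert the upfront purchase into an equivalent annual cost that can sit next to a subscription line item. The sketch below assumes the capital recovery factor method (an annuity formula that spreads an upfront cost over its useful life at a chosen cost of capital) and adds yearly operating cost on top. The function names and every dollar figure are hypothetical illustrations, not numbers from any real deployment.

```python
"""Annualize an edge hardware purchase the way you'd annualize a fleet asset.

A minimal sketch. All figures are hypothetical placeholders, and the capital
recovery factor is one common annualization method, not the only one.
"""


def capital_recovery_factor(rate: float, years: int) -> float:
    """Fraction of an upfront cost to charge each year to recover it, with interest, over `years`."""
    if years <= 0:
        raise ValueError("years must be positive")
    if rate == 0:
        # With no cost of capital this reduces to straight-line spreading.
        return 1 / years
    growth = (1 + rate) ** years
    return rate * growth / (growth - 1)


def annualized_edge_cost(capex: float, rate: float, years: int, annual_opex: float) -> float:
    """Equivalent annual cost: recovered capital plus yearly maintenance, power, and support."""
    return capex * capital_recovery_factor(rate, years) + annual_opex


if __name__ == "__main__":
    # Hypothetical site: $400k of local compute, 5-year useful life,
    # 8% cost of capital, $60k per year to run and maintain it.
    cost = annualized_edge_cost(capex=400_000, rate=0.08, years=5, annual_opex=60_000)
    print(f"Equivalent annual cost: ${cost:,.0f}")
```

Straight-line depreciation (capex divided by years) is the simpler alternative. The capital recovery factor is used here because it also charges for the cost of tying up capital, which makes the comparison with a pay-as-you-go cloud bill fairer.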

The cloud isn't going away. Most mature Physical AI deployments run both: edge for real-time decisions, cloud for storage, model training, and analytics. The question isn't which one wins. The question is: Where does each workload actually belong, and are we paying for it correctly?

Pricing Risk Is the Job

Here is the thing about operational downtime risk that rarely shows up in a cloud ROI model: it has a number. It is not theoretical. The operator who can quantify what an hour of stopped production costs, weigh it against the annualized cost of the edge infrastructure that prevents it, and present that trade-off clearly is doing the job.
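The trade-off in that paragraph can be written down as a small calculation: expected downtime hours avoided, times the hourly cost of stopped production, minus the annualized cost of the edge infrastructure. This is a minimal sketch under stated assumptions; the `DowntimeScenario` fields, the function names, and all input figures are hypothetical placeholders to be replaced with a site's own downtime history and vendor quotes.

```python
"""Weigh the expected annual cost of downtime against the annual cost of edge infrastructure.

A minimal sketch. All inputs are hypothetical placeholders.
"""
from dataclasses import dataclass


@dataclass
class DowntimeScenario:
    hourly_downtime_cost: float     # cost of one hour of stopped production (hypothetical)
    outage_hours_per_year: float    # expected connectivity-driven stoppage without edge
    residual_hours_per_year: float  # expected stoppage that remains with edge in place
    annual_edge_cost: float         # annualized hardware plus operating cost of the edge stack


def avoided_downtime_cost(s: DowntimeScenario) -> float:
    """Downtime cost per year that edge infrastructure is expected to prevent."""
    return (s.outage_hours_per_year - s.residual_hours_per_year) * s.hourly_downtime_cost


def net_annual_benefit(s: DowntimeScenario) -> float:
    """Positive: edge pays for itself on downtime alone. Negative: it does not."""
    return avoided_downtime_cost(s) - s.annual_edge_cost


def break_even_hourly_cost(s: DowntimeScenario) -> float:
    """Hourly downtime cost at which the edge investment exactly pays for itself."""
    hours_avoided = s.outage_hours_per_year - s.residual_hours_per_year
    if hours_avoided <= 0:
        # Edge avoids no downtime, so no hourly cost justifies it on this basis.
        return float("inf")
    return s.annual_edge_cost / hours_avoided


if __name__ == "__main__":
    scenario = DowntimeScenario(
        hourly_downtime_cost=25_000,
        outage_hours_per_year=12,
        residual_hours_per_year=2,
        annual_edge_cost=160_000,
    )
    print(f"Avoided downtime cost:  ${avoided_downtime_cost(scenario):,.0f}")
    print(f"Net annual benefit:     ${net_annual_benefit(scenario):,.0f}")
    print(f"Break-even hourly cost: ${break_even_hourly_cost(scenario):,.0f}")
```

The break-even figure is often the easier number to present: it turns the debate into a single question for the room, namely whether an hour of stopped production at this site costs more than that.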

The operator who treats edge computing as someone else's problem until the outage happens is not being conservative. They are deferring a decision that already has a price tag. They just haven't looked at it yet.

Pricing risk correctly is the whole game. Edge computing just made it harder to avoid.